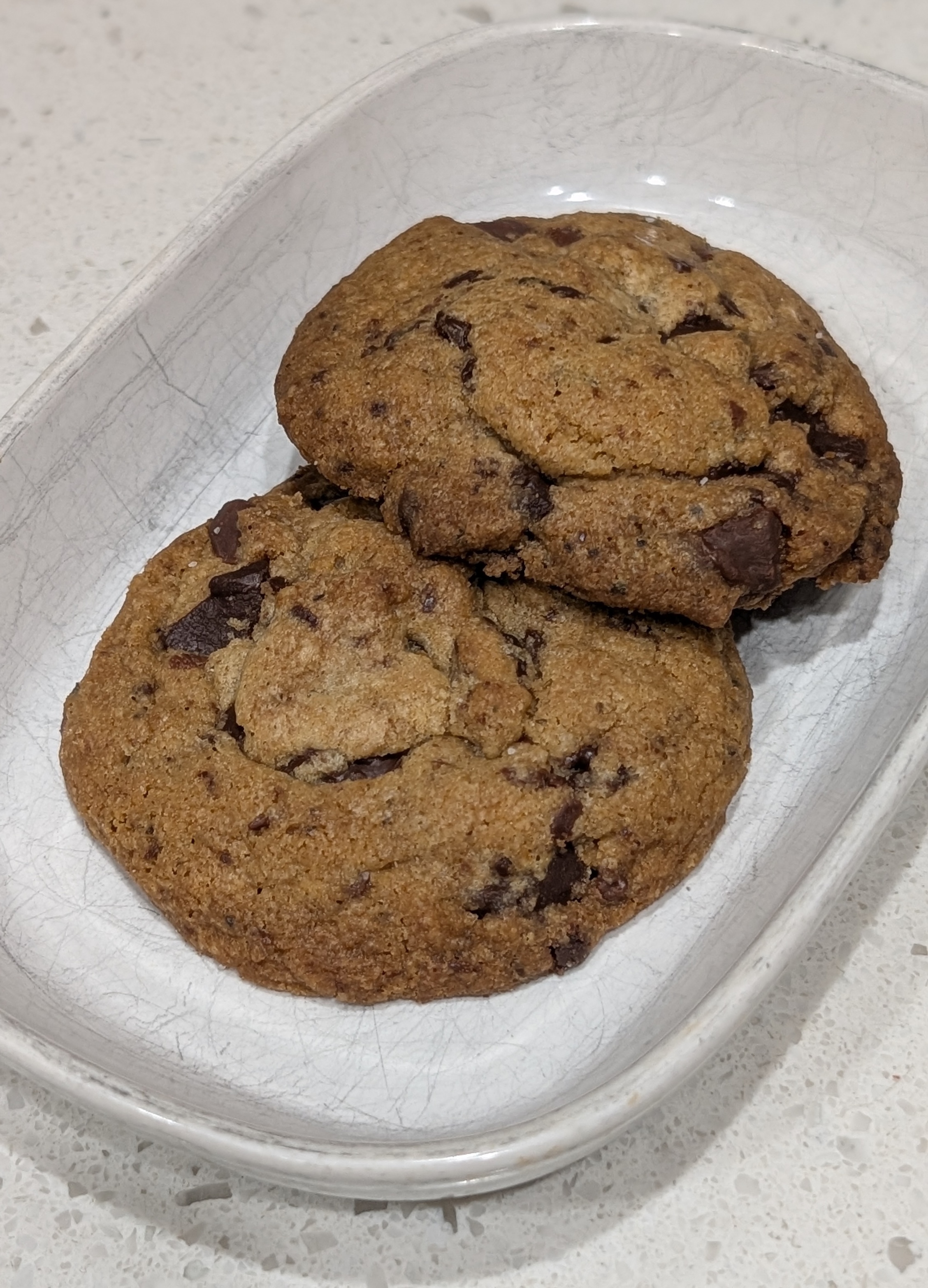

Brown Butter Chocolate Chip Cookies

08 May 2026

Category: Desserts · Cookies

Recipe: NYT — Brown Butter Chocolate Chip Cookies

Rating: 4 / 5 · Make again: Yes · Made by: Jamel

Brown butter and bigger chocolate chunks make these stand out: crunchy edges, a soft, chewy center, and a burst of coarse salt in every bite.